Having recently grappled with a UI audit/inventory across a large suite of products, I've stumbled upon an interesting, lightweight approach for generating an overview of the patterns, elements and metaphors used across a portfolio.

(This was originally posted on Medium, where it generated significant interest from design system leaders.)

Inspired by Nathan Curtis's team activity on Parts, it's a relatively simple process: a Microsoft Forms survey (any similar tool would do), a bit of jiggery-pokery in Excel (power-user terminology) and a way of outputting the data meaningfully.

I caught the guy next to me on the morning commute rapidly messaging a colleague on Slack...

"...this guy next to me is working on a cool design system chart..." (Train Guy)

Based on this positive, if indirect, feedback alone, I felt it was worth writing up...

Context

Elsevier have long been a leading scientific content publisher who, through their many books and journals, have published significant works by scientific luminaries such as Galileo, Darwin and Hawking.

As well as being a content publisher, they're also establishing themselves as a leading technology provider in the field of scientific research, building digital tools to support researchers in their daily routines.

The organisation is vast, and the number of products and projects running through it is mind-boggling.

The Challenge

My role is to establish a unified UI Framework to support the wider design system development, and I felt it was important to begin with a functional UI audit/inventory.

The teams are distributed across London, Aalborg, Amsterdam, New York and Dayton, and the products they create cover use cases such as deep reading, content discovery, staying up to date, data management, and analytical assessment and evaluation.

Across such a body of products, all moving at different paces and all at different stages of their lifecycle, a wide range of design work happens daily, with a whole host of nuanced flows, patterns and unique solutions to accommodate.

While we all do our best to surface our work through Slack and periodic showcases, we've no single source of truth for our design work or a common set of design tools that helps us stay on top of what is out there in the wild.

So how do we overcome this?

An approach

Current design systems thinking suggests that Step #1 is usually the creation of a Visual Inventory.

Capturing screens for every single flow and interaction across this suite of products could have run into the thousands, and they would quickly have gone out of date given the pace some of the projects were moving at.

As such, we started by requesting just 5 key screens from our 12 key products, using RealTime Board (now Miro) as a central tool for gathering this material.

This quickly gave us a bird's-eye view of the products, so we could get a sense of the gaps in continuity/coherence, and helped to surface some of the UI themes for us to prioritise.

Observations

Despite having a relatively deep and widely used set of digital brand guidelines in place (The Masterbrand), there were clear gaps in our collective approach to applying the guidance.

Whilst we observed only minor divergence in UI controls, thanks to the well-formed documentation, some subtler aspects of our design, such as spacing, layout and breakpoints, were clearly areas to work on in order to bring the portfolio closer together.

With very content-heavy interfaces, another area to address was the patterns we used to represent key content types such as user profiles, articles and statistics. By recognising these as important visual anchors and tightening them up holistically, we felt we could become more cohesive.

Finally, a hidden theme that we were able to identify through the inventory was a divergence in the concepts and metaphors each team used to design their interfaces.

Common cues such as sharing, favouriting, bookmarking, saving and exporting were being represented in different ways across the products. I felt this was critical to identify as something to move forward with.

Learnings

Despite the observations we could draw from this sample inventory, it wasn't the full picture.

The snapshot had clearly surfaced some important themes to circle back on, but it hadn't shown us what teams actually rely on to build out their products.

Asking each team to supply a full set of screens/flows would likely produce hundreds more, creating yet more raw material to cut up and synthesise.

Throwing all the screens/elements down on an infinite canvas didn't feel like it was going to help us become more organised or more aware of the extent of our UI needs.

I felt the audit needed to be a little more data-driven to help really demonstrate the scale of our task and help identify shared needs.

Realisation

Whilst the designers believed in (and expected) all the benefits of a well-organised design system, time and opportunities to work holistically in a product-driven environment were limited.

As a result, through no fault of their own, designers were not working enough on common UI solutions just yet.

Of course, there were some groups here and there who had formed alliances, mainly as a result of sharing the same office space. Across the group, though, there wasn't enough visibility for designers to know whether an interface element or flow on their backlog had already been researched, designed and validated elsewhere in the business and, if so, who to contact.

With this in mind, I felt it was important not only to capture a full inventory of UI elements, but also to generate awareness of what each team relies on and surface it back to the teams in a meaningful way.

Idea

I wanted the next steps to take us deeper and give the teams a better snapshot of what's being used where, without a) asking them to continually update screens and flows in Miro (which is like herding cats) or b) me taking on the duty of collating it all myself.

While the visual inventory helped to surface holistic themes, surveying the landscape rather than collating every instance seemed likely to bear more fruit.

It was this thought that took me back to the 'Parts' workshop/worksheet that Nathan Curtis outlines in his Medium post: a great, simple checklist of UI elements to help tease out what a team should focus on. Of course, with multiple teams in different locations this wasn't something I could sit down and run, but I felt the idea could help us.

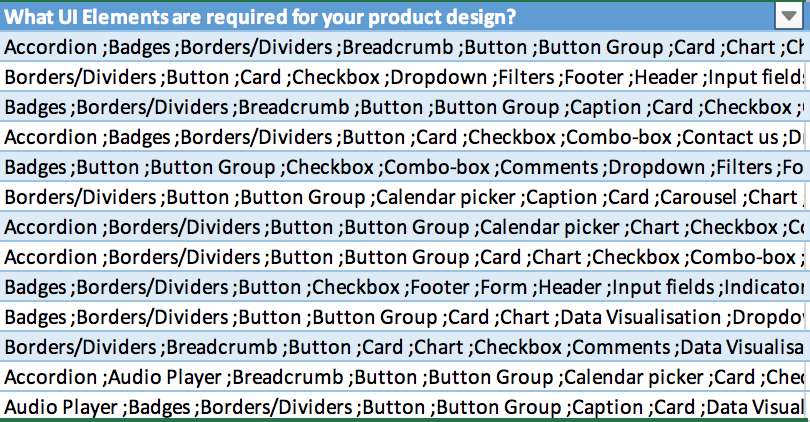

With that in mind, I created 3 surveys, each taking designers no longer than 5 minutes to complete, which together would provide a matrix of our UI usage across the 12 teams. These were:

- UI Controls

- Concepts/Metaphors

- Content Patterns

Each survey was built from a handful of questions designed to provide insight into our collective knowledge and understanding:

- Who are you? (To capture the points of contact)

- What products are you designing for? (To capture where the designs are being implemented)

- What are the [UI Controls | Concepts/Metaphors | Content Patterns] you rely on to design your interface? (This is the multiple-choice checklist and the key audit data)

- Is there anything that's missing? (A chance to capture gaps in the individual/collective knowledge)

- Are there any terms you're unclear on? (A chance to capture gaps in terminology)

These went out over the course of a couple of weeks and, pretty effortlessly, the responses coming back started to generate an interesting overview of what's happening where.

Transforming the data

The surveys were built using Microsoft Forms, so the responses came back as an Excel spreadsheet: OK-ish, but not very legible for playing back to the team.
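The Excel steps themselves aren't recorded here, so purely as an illustration, here is a minimal Python sketch of the first transformation. It assumes the export places each respondent's multi-select answers in a single cell separated by semicolons (worth checking against your own file), and the column headers `Name`, `Product` and `UI Controls` are hypothetical. Each response is split into one row per ticked element, a long format that is easy to regroup by element or by team.

```python
import csv
import io

# Hypothetical headers: rename these to match the columns in your own export.
NAME_COL = "Name"
PRODUCT_COL = "Product"
CHOICES_COL = "UI Controls"


def to_long_format(csv_text: str) -> list[tuple[str, str, str]]:
    """Split each response's multi-select cell into (product, element, name) rows.

    Assumes multi-select answers share one cell, separated by semicolons,
    e.g. "Button;Tabs;".
    """
    rows = []
    for record in csv.DictReader(io.StringIO(csv_text)):
        for element in record[CHOICES_COL].split(";"):
            element = element.strip()
            if element:  # skip the empty string left by a trailing semicolon
                rows.append((record[PRODUCT_COL], element, record[NAME_COL]))
    return rows


# Made-up sample data in the assumed export shape.
raw = """Name,Product,UI Controls
Ana,Product A,Button;Tabs;
Ben,Product B,Button;Checkbox;
"""

for row in to_long_format(raw):
    print(row)
```

Keeping one row per ticked element means a respondent who ticks nothing simply contributes no rows, rather than an empty placeholder.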

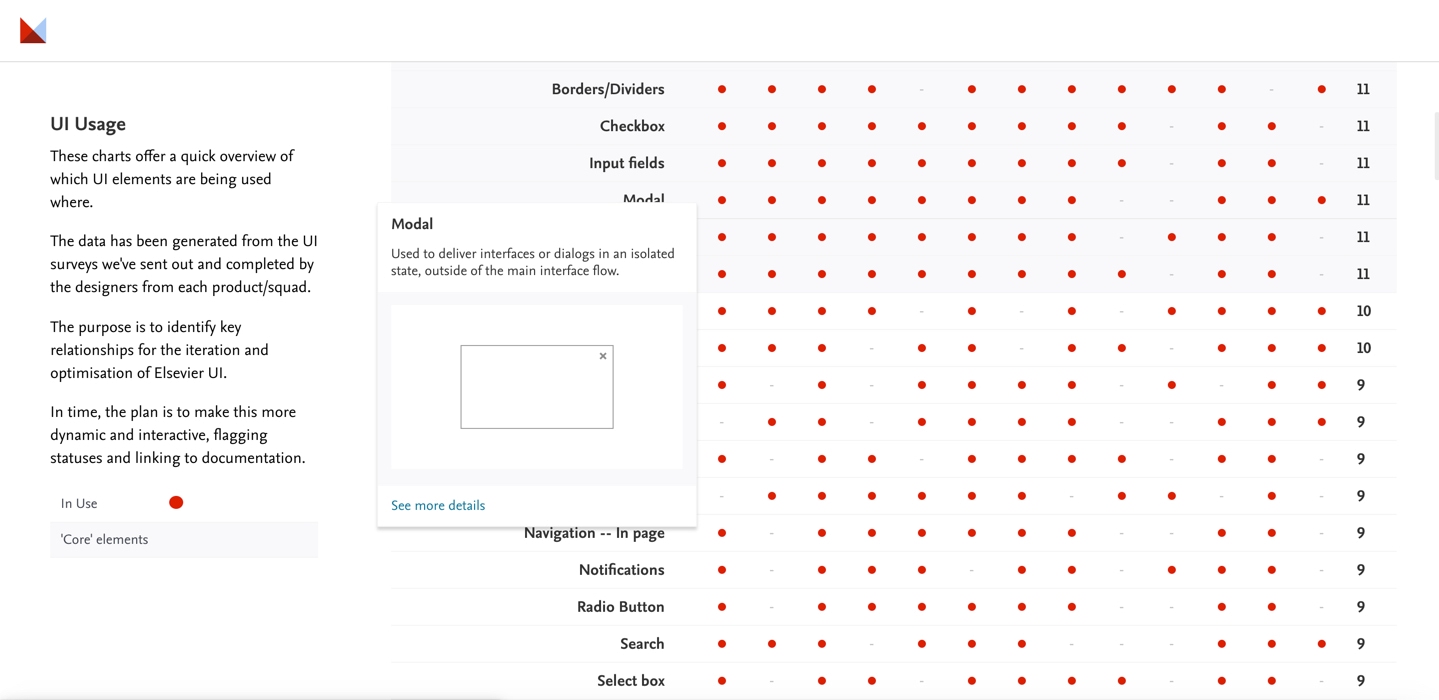

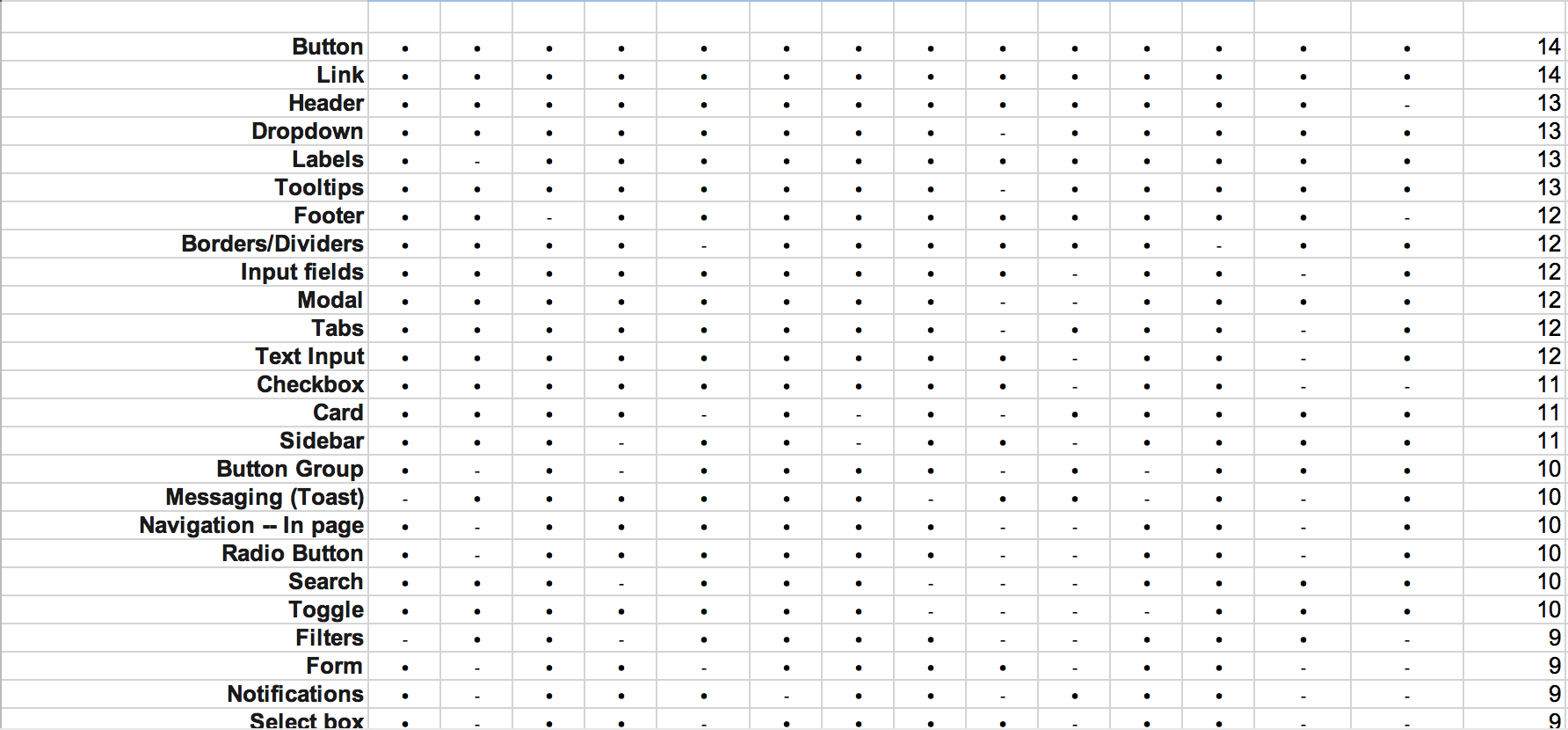

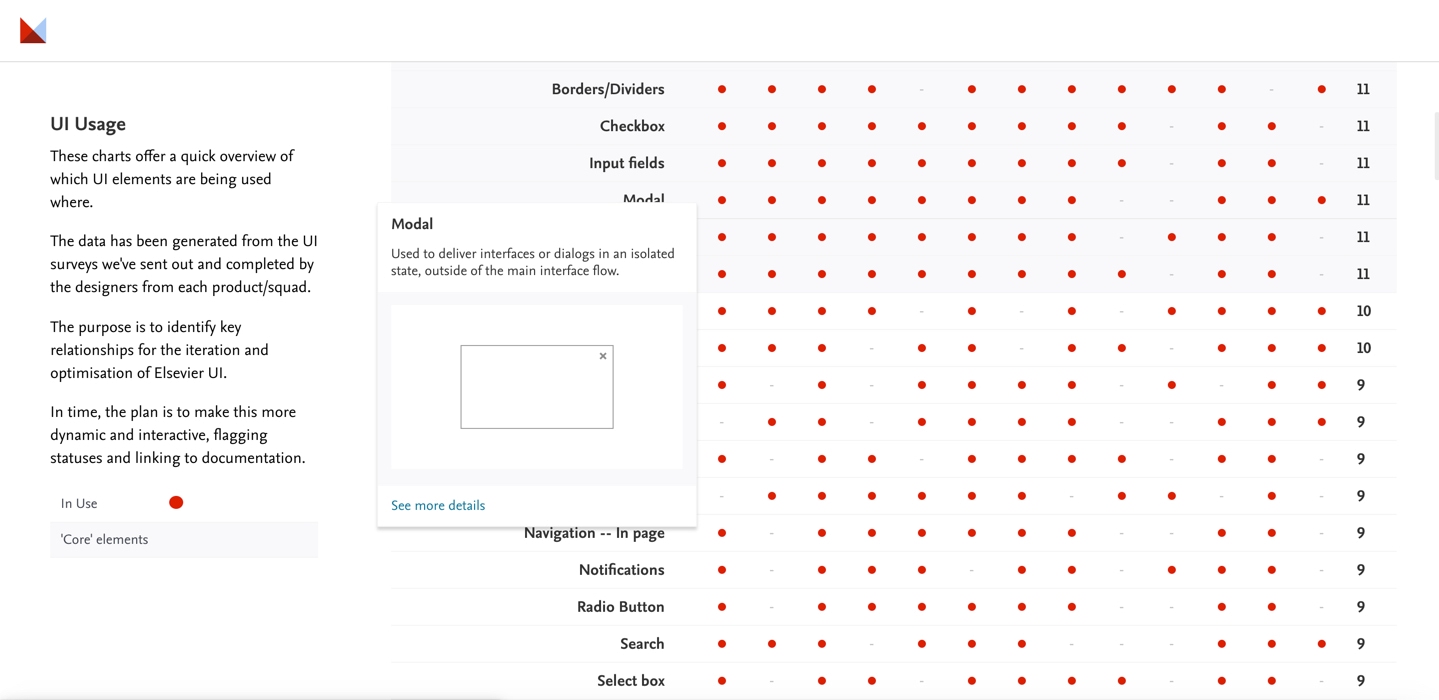

With a little bit of Googling, I figured out how to restructure the raw data into Elements by Team, sorted by Frequency of use from highest to lowest. This immediately gave us a powerful overview we'd never had before. I then exported this to HTML and styled the data, dog-fooding some patterns and techniques we're hoping to deploy to the UI Framework in due course.
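The restructuring above was done in Excel. As a rough equivalent, the Python sketch below groups (team, element) pairs into elements by team, sorts them by frequency (taken here to mean the number of teams that ticked an element, with ties broken alphabetically) and renders a bare HTML table. The function names are hypothetical, the sample data is made up, and the styling step is left out.

```python
from collections import defaultdict
from html import escape


def elements_by_team(pairs):
    """Group (team, element) pairs into (element, sorted teams), most-used first."""
    teams = defaultdict(set)  # a set so repeat answers from one team count once
    for team, element in pairs:
        teams[element].add(team)
    # Highest team count first; ties broken alphabetically for a stable order.
    return sorted(
        ((element, sorted(ts)) for element, ts in teams.items()),
        key=lambda item: (-len(item[1]), item[0]),
    )


def to_html(summary, all_teams):
    """Render an element-by-team matrix as an unstyled HTML table."""
    head = "".join(f"<th>{escape(t)}</th>" for t in all_teams)
    lines = [f"<table><tr><th>Element</th><th>Teams</th>{head}</tr>"]
    for element, teams in summary:
        # A tick mark where the team uses the element, an empty cell otherwise.
        cells = "".join(
            "<td>&#10003;</td>" if t in teams else "<td></td>" for t in all_teams
        )
        lines.append(f"<tr><th>{escape(element)}</th><td>{len(teams)}</td>{cells}</tr>")
    lines.append("</table>")
    return "\n".join(lines)


# Made-up sample data.
pairs = [
    ("Product A", "Button"), ("Product A", "Tabs"),
    ("Product B", "Button"), ("Product B", "Checkbox"),
    ("Product C", "Button"), ("Product C", "Tabs"),
]
summary = elements_by_team(pairs)
print(summary)
print(to_html(summary, ["Product A", "Product B", "Product C"]))
```

Counting teams rather than raw responses stops a team with several designers from inflating an element's position in the list.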

The Outcome

The result is a pretty insightful overview of the most-used elements throughout our products, all wrapped up in a chart that now gives us a proxy backlog for design system work that could, in theory, support everyone.

In the interim, it also serves as a directory for teams to better understand who else in the organisation may have thoughts, insights, research or robust solutions to things they may be looking to design, which seems to be a great addition to our toolkit.

From the output we can see at a glance which designers should contribute to, or approve, the iteration and optimisation of our interfaces.
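Because the "Who are you?" answers sit alongside the ticked elements, the same data can be turned around into a simple directory of who to talk to. This minimal sketch assumes rows of (team, element, designer), one per ticked element; `contacts_for` is a hypothetical helper and the names are placeholder data.

```python
from collections import defaultdict


def contacts_for(rows, element):
    """Return {team: [designers]} for everyone who ticked `element`."""
    directory = defaultdict(set)
    for team, ticked, designer in rows:
        if ticked == element:
            directory[team].add(designer)
    # Sort teams and names so the directory reads consistently.
    return {team: sorted(names) for team, names in sorted(directory.items())}


# Placeholder data.
rows = [
    ("Product A", "Tabs", "Ana"),
    ("Product B", "Button", "Ben"),
    ("Product C", "Tabs", "Cal"),
    ("Product C", "Tabs", "Dee"),
]
print(contacts_for(rows, "Tabs"))
```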

For the business, it's a helpful frame of reference for the magnitude of the task, helping to quantify the effort required to undertake the work.

Next Steps

In time, the plan is to make this more dynamic and interactive, flagging statuses and linking out to documentation as we progress building out our design system proper.

We're still working through how we may tackle and consolidate the divergence: the team, its size, the contribution model.

For now, we've broken the back of the task with this simple survey technique.